Hi there! 👋 Welcome to Yupeng Su’s Homepage.

I am a Ph.D. student in Computer Science at the University of California, Santa Barbara (UCSB), advised by Prof. Zheng Zhang. I previously received my B.E. degree in Microelectronics from the Southern University of Science and Technology (SUSTech), advised by Prof. Hao Yu.

My research focuses on efficient pre-training and post-training optimization for large language models (LLMs), particularly quantization, sparsification, and low-rank decomposition for improving training and inference efficiency. I am also interested in high-performance computing and AI accelerators, including compiler optimization and GPU/FPGA kernel adaptation for efficient and energy-aware deployment. I have published and submitted papers with total

My experience spans the end-to-end lifecycle of large language models (LLMs) — including pre-training (from scratch and continued pre-training), fine-tuning, post-training optimization (quantization, pruning, knowledge distillation, and low-rank decomposition), and efficient deployment on edge devices.

Beyond algorithmic optimization, I bridge the gap between machine learning and hardware, with hands-on experience in Python, C++, and Verilog HDL, as well as FPGA prototyping, digital front-end design, and compiler co-design for edge AI systems.

My personal CV is attached here. If you are interested in my research or have any questions, please feel free to contact me at yupengsu06@gmail.com or yupengsu@ucsb.edu.

🔥 News

- 2025.02 : 🎉 Our paper LLM-Barber has been accepted by IEEE/ACM ICCAD 2025.

- 2025.02 : 🎉 Our paper EdgeLLM has been accepted by IEEE TCAS I: Regular Papers.

- 2025.02 : I have been admitted to the Ph.D. program at the University of California, Santa Barbara (UCSB)!

- 2024.09 : I joined the HKU Next Gen AI (NGai) Lab as a Student RA, collaborating with Prof. Hongxia Yang’s Lab at PolyU!

- 2024.02 : 🎉 Our paper APTQ has been accepted by IEEE/ACM DAC 2024.

📝 Publications

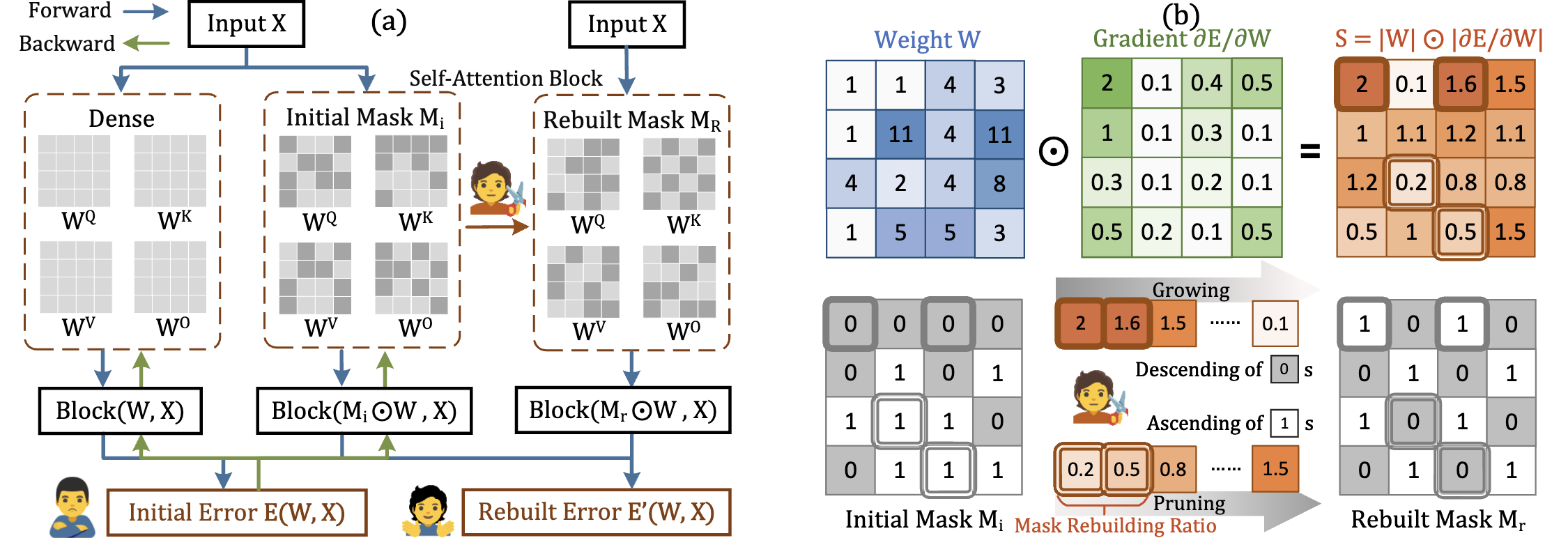

LLM-Barber: Block-Aware Rebuilder for Sparsity Mask in One-Shot for Large Language Models

Yupeng Su, Ziyi Guan, Xiaoqun Liu, Tianlai Jin, Dongkuan Wu, Graziano Chesi, Ngai Wong, Hao Yu

- LLM-Barber incorporates block-aware error optimization and introduces an innovative pruning metric with weights multiplied by gradients.

- LLM-Barber supports not only unstructured pruning but also structured pruning such as the N:M pattern, which is better suited to hardware acceleration.
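To illustrate the two ideas above, here is a minimal sketch of N:M pruning driven by a weight-times-gradient score. It is not the LLM-Barber implementation: the block-aware mask rebuilding is not covered, and the function names `nm_prune_mask` and `apply_mask`, the flat-list layout, and the toy values are all assumptions made for illustration.

```python
def nm_prune_mask(weights, grads, n=2, m=4):
    """Return a 0/1 mask that keeps the n highest-scoring entries in each group of m."""
    if len(weights) != len(grads):
        raise ValueError("weights and grads must have the same length")
    if len(weights) % m != 0:
        raise ValueError("length must be a multiple of m")
    mask = [0] * len(weights)
    for start in range(0, len(weights), m):
        group = range(start, start + m)
        # Importance score |weight * gradient| (assumed form of the metric).
        keep = sorted(group, key=lambda i: abs(weights[i] * grads[i]), reverse=True)[:n]
        for i in keep:
            mask[i] = 1
    return mask


def apply_mask(weights, mask):
    """Zero out every weight whose mask entry is 0."""
    return [w * k for w, k in zip(weights, mask)]


# Toy example: one row of eight weights, pruned with a 2:4 pattern.
weights = [0.5, -0.1, 0.3, 0.05, -0.2, 0.4, 0.01, -0.6]
grads = [0.1, 0.9, -0.2, 0.3, 0.5, 0.1, 0.2, -0.1]
mask = nm_prune_mask(weights, grads, n=2, m=4)
pruned = apply_mask(weights, mask)
print(mask)  # [0, 1, 1, 0, 1, 0, 0, 1]
```

Note that the largest weight in the first group (0.5) is pruned because its gradient is small, which is the kind of decision a magnitude-only metric would not make. Every group of four retains exactly two nonzero weights, the regular layout that sparse hardware kernels can exploit.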

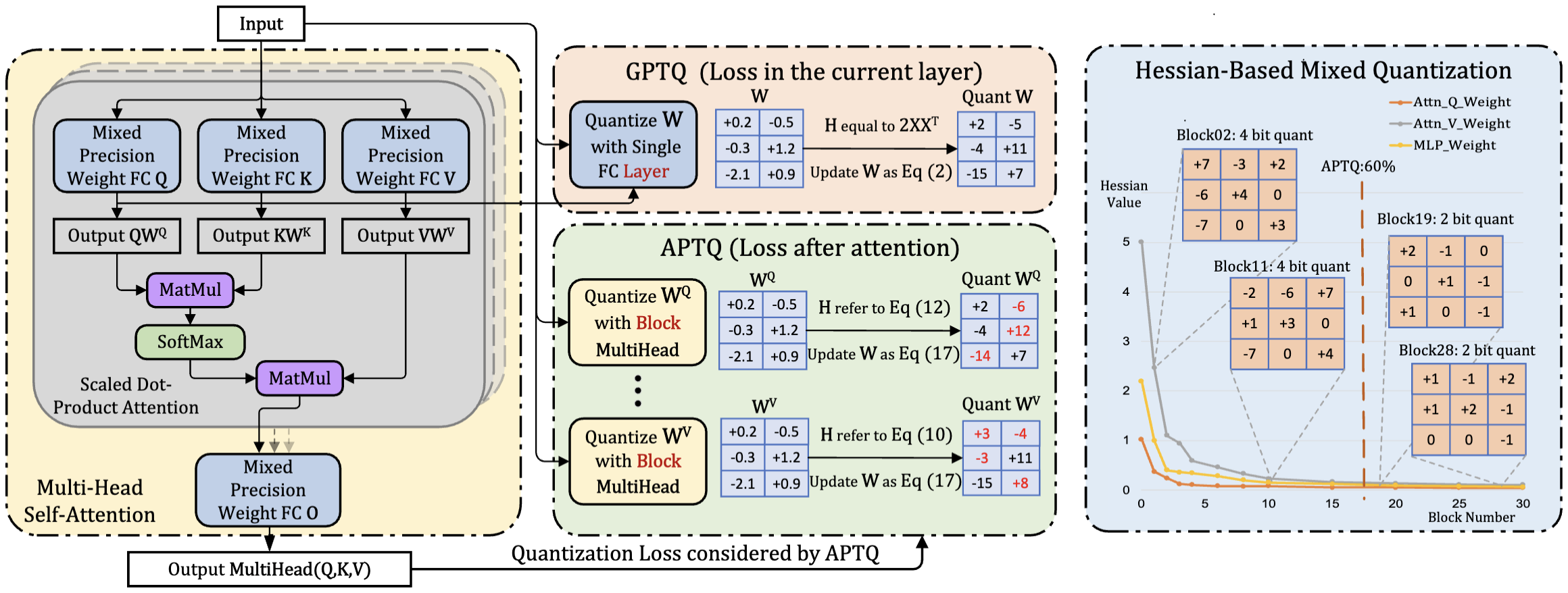

APTQ: Attention-aware Post-Training Mixed-Precision Quantization for Large Language Models

Ziyi Guan, Hantao Huang, Yupeng Su, Hong Huang, Ngai Wong, Hao Yu

- APTQ is the first approach to consider the self-attention architecture during post-training quantization and to combine it with mixed precision based on the Hessian matrix.

- [TCAS I] EdgeLLM: A Highly Efficient CPU-FPGA Heterogeneous Edge Accelerator for Large Language Models, Mingqiang Huang, Ao Shen, Kai Li, Haoxiang Peng, Boyu Li, Yupeng Su, Hao Yu.

- [arXiv] Quantization Meets Reasoning: Exploring LLM Low-Bit Quantization Degradation for Mathematical Reasoning, Zhen Li, Yupeng Su, Runming Yang, Congkai Xie, Zheng Wang, Zhongwei Xie, Ngai Wong, Hongxia Yang.

- [arXiv] PTQTP: Post-Training Quantization to Trit-Planes for Large Language Models, He Xiao, Runming Yang, Qingyao Yang, Wendong Xu, Zhen Li, Yupeng Su, Zhengwu Liu, Hongxia Yang, Ngai Wong.

🛠 Projects

- [Hisilicon Embedded Competition, Second Prize] HiBao: Your Artificial Intelligent Voice Assistant, Yupeng Su, Guanqi Peng, Jiaqi Yang.

- [SME309 Microprocessor Design] ARM Processor Designed with Verilog, Yupeng Su.

- [CS205 Cpp Program Design] Libtensor Designed with C++, Yupeng Su, Xiaoqun Liu, Zexin Feng.

📖 Education

- 2025.09 – present, Ph.D. in Computer Science, University of California, Santa Barbara (UCSB).

- 2021.09 – 2025.07, B.Eng. in Microelectronics, Southern University of Science and Technology (SUSTech).

- 2018.09 – 2021.06, High School Student, The First High School of Changsha, Hunan.

💻 Internships

- 2024.11 – 2025.05, Student Research Assistant, Prof. Hongxia Yang’s Lab, The Hong Kong Polytechnic University (PolyU).

- 2024.08 – 2025.02, Student Research Assistant, Next Gen AI (NGai) Lab, The University of Hong Kong (HKU).

- 2024.06 – 2024.09, Summer Intern, High Performance Integrated Circuit Design Lab, Southern University of Science and Technology (SUSTech).

🎖 Honors and Awards

- 2025.07, Nominee of Top Ten Graduates, Southern University of Science and Technology.

- 2025.07, Guo Xie Birong Scholarship for Academic Excellence, Southern University of Science and Technology.

- 2025.06, Top Ten Graduates of the College of Engineering, Southern University of Science and Technology.

- 2022–2024, First-Class Merit Student Scholarship (Three Consecutive Years), Southern University of Science and Technology.

- 2023.12, Future Star, Ministry of Education – Huawei Smart Base Project.

📚 Student Works

- 2023.09 – 2025.06, Peer Mentor for Academic Advisory Program, Student Affairs Department of SUSTech.

- 2022.09 – 2025.06, Instructor for Undergraduate Course Calculus I/II, Student Development Center of SUSTech. Repo Link